AI adoption is moving fast because it is easy to integrate. Securing it is harder because it changes how systems behave.

Nowadays, AI is everywhere. It is embedded in web applications, tools, browser extensions, and many other platforms. In web applications, it appears as chat interfaces, document processors, and copilots. Sometimes it is visible to the user. In many cases, it operates in the background as part of internal workflows.

Even when it looks simple, like a single input entry point, the backend often tells a different story. That “chatbot” may rely on multiple components such as retrieval systems, memory, external tools, and sometimes full agent chains that make decisions and take actions.

What looks like a single input is often an entire ecosystem.

This shift has created the need for a new type of security testing: agentic systems pentesting. Only recently has OWASP taken a leading role in this space by publishing the Top 10 Vulnerabilities for Agentic Systems. Despite this progress, teams are still struggling to determine how to properly test these systems.

In this blog, we will break this down and provide a clear way to approach it.

The Problem: One System, Two Different Testing Realities

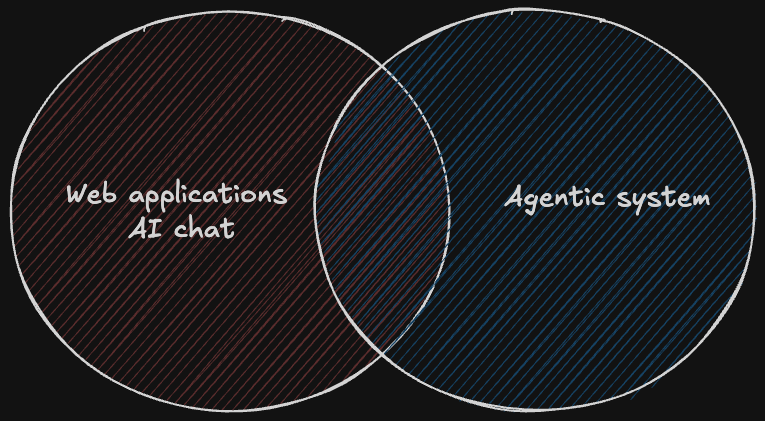

To better understand the difference, consider a company with a web application that includes an AI-enabled feature, such as a chat interface that allows users to perform actions on their accounts. The common approach in this case is to say, “We perform a web application pentest, and it also covers AI vulnerabilities.” This is true, but only partially. The chat interface is often just one part of a much larger system.

Behind that interface, agents may consume data from multiple sources, use tools and APIs, make decisions across multiple steps, and act on behalf of the user or the system. This behavior is not fully exposed through the web layer alone.

As a result, testing only the web application does not provide full coverage. This is why pentesting agentic systems has become necessary, not as an extension of traditional web testing, but as a separate effort. While there is some overlap, the approach, entry points, and risks are fundamentally different.

OWASP Top 10 for Agentic Systems: ASI01–ASI10 Explained

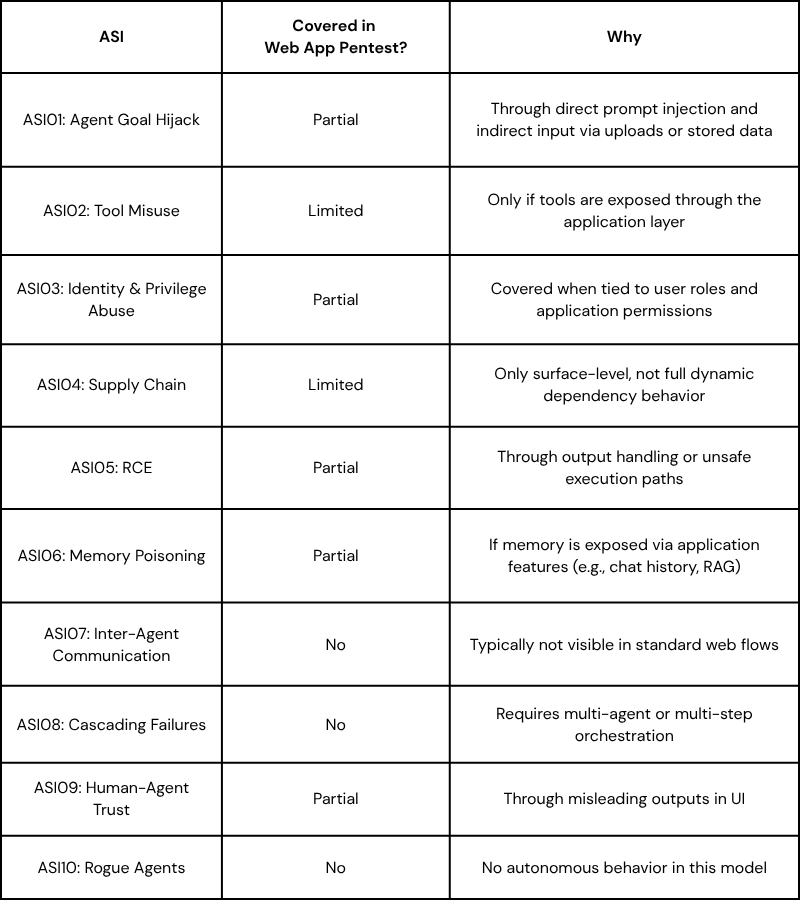

OWASP’s Top 10 for Agentic Systems defines the main security risks introduced when AI systems can act, use tools, and operate across multiple steps. Each item describes a different way these systems can be manipulated or fail.

ASI01: Agent Goal Hijack

Attackers manipulate the agent’s instructions or context to change its objective. Instead of completing the intended task, the agent is redirected to perform malicious or unintended actions.

ASI02: Tool Misuse and Exploitation

Agents use legitimate tools in unsafe ways. This can include deleting data, exfiltrating information, or triggering unintended workflows due to manipulated inputs or poor constraints.

ASI03: Identity and Privilege Abuse

Agents operate with inherited or excessive permissions. This allows attackers to escalate privileges or perform actions beyond what was originally intended.

ASI04: Agentic Supply Chain Vulnerabilities

Agents rely on external components such as tools, plugins, models, or data sources. If these are compromised or malicious, they can introduce hidden behavior or backdoors into the system.

ASI05: Unexpected Code Execution (RCE)

Agents generate or execute code as part of their operation. Attackers can exploit this to run arbitrary code, leading to system compromise.

ASI06: Memory and Context Poisoning

Attackers inject malicious data into the agent’s memory or knowledge sources. This persists over time and influences future decisions and actions.

ASI07: Insecure Inter-Agent Communication

Agents communicate with other agents or services. If this communication is not secured, it can be intercepted, spoofed, or manipulated.

ASI08: Cascading Failures

A single failure or malicious action propagates across multiple agents or systems, amplifying the impact and causing widespread disruption.

ASI09: Human-Agent Trust Exploitation

Users trust AI outputs and recommendations. Attackers exploit this trust to influence decisions, leading users to approve harmful actions.

ASI10: Rogue Agents

Agents deviate from their intended behavior and operate outside their defined scope. This can happen due to compromise, misalignment, or uncontrolled autonomy.

AI-Enabled Features in Web Applications

In many applications, AI is still implemented as a feature within a controlled flow. Common examples include a chatbot embedded in a product, a document summarization capability, or AI-assisted search and recommendations. In this setup, the application still defines the boundaries. The AI component receives input, processes it, and returns output within a predefined context. From a security perspective, this keeps the system relatively close to traditional web application behavior.

Within this scope, several OWASP AI risks are indeed relevant and can be covered as part of a web application pentest. These include prompt injection via direct user input or indirect input through uploaded files or stored data, unsafe output rendering, and data exposure in responses.

Testing focuses on how input reaches the model and how the application handles the output. The goal is to determine whether untrusted data can influence the model in unintended ways or cause leakage or injection issues in the application layer. At this stage, the mindset remains similar to classic web security, with additional considerations for how AI processes and returns data.

Agentic Systems: A Different Testing Reality

Agentic systems operate fundamentally differently from traditional AI-enabled features. In this model, AI is not just processing input. It is making decisions, chaining actions, and interacting with other systems. An agent can decide what steps to take, call external tools or APIs, retrieve and combine data from multiple sources, and persist context over time to influence future behavior.

As a result, the attack surface expands beyond direct user interaction. Entry points are no longer limited to a single request. They now include data stored in the system, external content retrieved at runtime, outputs from connected tools, and context shared between agents.

This introduces risks that do not exist in traditional application models. Attackers can influence behavior indirectly by manipulating data pipelines, abusing tool permissions, triggering multi-step attack chains, or exfiltrating data through the agent’s reasoning and actions. Testing in this environment is not about a single request and response. It requires understanding how the system behaves over time and how different components interact, then identifying ways to manipulate that behavior.

.png)

Why You Cannot Treat Them the Same

There is a natural tendency to treat agentic systems as just another feature of the application. In practice, this leads to gaps in coverage.

Testing the web layer alone will not reveal how an agent behaves when it receives manipulated data from internal sources, chains actions across multiple tools, or operates with broader permissions than intended. These behaviors fall outside the scope of a typical request-and-response model.

The difference is simple but critical. When AI is a feature, you test it like an input. When AI is an agent, you test it like a system that acts. Each requires a different testing approach, different assumptions, and a different understanding of risk.

AI Continuous Testing is What You Need to Secure Your AI Agents

These systems are changing rapidly - developers constantly integrate new tools, MCP servers, and data sources. Each addition introduces new potential attack surfaces.

This creates a familiar problem in a new context. Testing once per period is no longer enough. A newly connected tool or data source can immediately introduce risks such as data leakage or system compromise.

At Terra, we bring the same concept of continuous pentesting into the world of agentic AI systems. We identify new components, analyze their impact on the attack surface, and generate testing signals aligned with the OWASP Top 10 for agentic systems. When combined with an understanding of your business logic, these signals are tested in near-real time by our agents, allowing us to uncover real, context-aware vulnerabilities as they emerge.