Security teams have been sold a lie.

The pitch goes something like this: deploy our fully autonomous AI agents, let them run continuously across your production environments, and sleep soundly knowing your attack surface is covered. It sounds compelling until you're the security leader staring at 300 critical alerts on a Monday morning, praying an autonomous system didn’t break something in production while generating them. Your engineers are drowning in false positives. Legitimate vulnerabilities are buried under an avalanche of phantom findings. But the bigger risk isn't noise. It's handing the keys of your live environment to an AI that doesn't necessarily understand the difference between a test payload and a business-critical transaction.

This is the silent tax of pure automation, and it's costing organizations far more than they realize. For the largest enterprises, a single incident can spiral into the billions, as seen in the 2025 Jaguar Land Rover cyberattack, whose total cost has been estimated at roughly £2 billion. The gap between detection speed and breach cost is not a technical problem. It's a judgment problem.

At Terra Security, we built our platform around a fundamentally different premise: AI should take over the work where human experts are inefficient and augment them where they can't be replaced. That design principle, known as the human-in-the-loop model, changes everything about how security testing actually works.

The Problem With Full Autonomy and No Oversight

The future of offensive security can't compromise on accuracy, depth, scale, or safety, and a fully autonomous approach can't yet deliver all four. Automated pentesting tools have a place in any modern security program. But the industry has moved well beyond simple scanners. Today's frontier is fully autonomous AI agent platforms running across your production environment in real time.

That autonomy introduces a risk that accuracy metrics alone don't capture: the risk of damage. When an autonomous agent breaks something or leaks sensitive information, there's no human judgment in the loop to stop it. Speed and scale without accuracy are just organized noise. But the real cost of fully autonomous operation isn't the false positives. It's what happens when there are no guardrails on real findings.

Automated tools also have a structural blind spot: they don't fully understand your business. They can find misconfigurations and known CVEs, but they struggle to identify logic flaws, the kinds of vulnerabilities unique to your application's workflows. These are the vulnerabilities that cause real-world breaches, and they require a tester who understands intent, not just syntax.

What Human-in-the-Loop Actually Means

"Human-in-the-loop" is not a marketing term at Terra. It is an architectural decision baked into every engagement. Here's how it works in practice: each client target is assigned a credentialed, experienced security professional who owns the testing engagement. That human expert can be a Terra professional or a member of the client's own internal team.

The security landscape is shifting: many organizations are transforming their SOC function, moving their security operations staff into analyst and oversight roles rather than hands-on execution. In these cases, Terra's platform gives those internal analysts the AI-powered infrastructure to act as the human-in-the-loop themselves, combining deep institutional knowledge of their own environment with the scale and speed that agentic AI provides.

The human expert sits at the controls, approving intrusive attacks, guiding the agents through complex multi-step exploits, and, when needed, providing the creativity that only humans currently possess. This means that when Terra flags a critical vulnerability, it has already been verified. No false positives. No chasing ghosts. Just confirmed, exploitable findings with real-world impact and auto-remediation tailored to your unique architecture.
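The approval step described above can be pictured as a gate that sits between an agent and the target. The following is a minimal sketch under stated assumptions, not Terra's implementation: the names `Action`, `ApprovalGate`, `decide`, and `execute` are all hypothetical, and the `decide` callback stands in for whatever review interface a human expert would actually use.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class Action:
    """A single step an agent wants to take (hypothetical model)."""
    name: str
    target: str
    intrusive: bool  # True if the step could alter state or disrupt the target


@dataclass
class ApprovalGate:
    """Routes intrusive actions to a named human before they run."""
    approver: str                      # the person accountable for intrusive actions
    decide: Callable[[Action], bool]   # placeholder for a real human review step
    audit_log: List[str] = field(default_factory=list)

    def run(self, action: Action, execute: Callable[[Action], str]) -> str:
        if action.intrusive:
            if not self.decide(action):
                self.audit_log.append(
                    f"{self.approver} denied {action.name} on {action.target}"
                )
                return "blocked"
            self.audit_log.append(
                f"{self.approver} approved {action.name} on {action.target}"
            )
        else:
            # Non-intrusive steps (e.g. passive reconnaissance) run without review.
            self.audit_log.append(f"auto-ran {action.name} on {action.target}")
        return execute(action)


# Example: the reviewer refuses anything intrusive against a payments system.
gate = ApprovalGate(
    approver="analyst@example.com",
    decide=lambda a: a.target != "prod-payments",
)


def fake_execute(action: Action) -> str:
    return f"ran {action.name}"


recon = gate.run(Action("port_scan", "prod-payments", intrusive=False), fake_execute)
exploit = gate.run(Action("sqli_exploit", "prod-payments", intrusive=True), fake_execute)
staging = gate.run(Action("sqli_exploit", "staging-api", intrusive=True), fake_execute)
```

In this sketch every intrusive action leaves an audit entry tied to a named approver, which is one simple way to make the accountability question answerable after the fact.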

The model also inverts the traditional workload problem. In legacy manual pentesting, the expert spends a significant portion of their time on routine, automatable tasks such as reconnaissance, running standard tool suites, and writing report sections. With Terra, the AI handles all of that, freeing the human expert to focus entirely on the high-level work: interpreting unusual application behavior, probing business logic, and communicating risk in context.

"Terra's agentic pen-testing boosted our ROI by over 100%. Every dollar saved on repetitive tasks went straight into deeper, quality testing based on our business context." — Kyle Kurdziolek, VP Security, BigID

How Terra Compares to Other Approaches

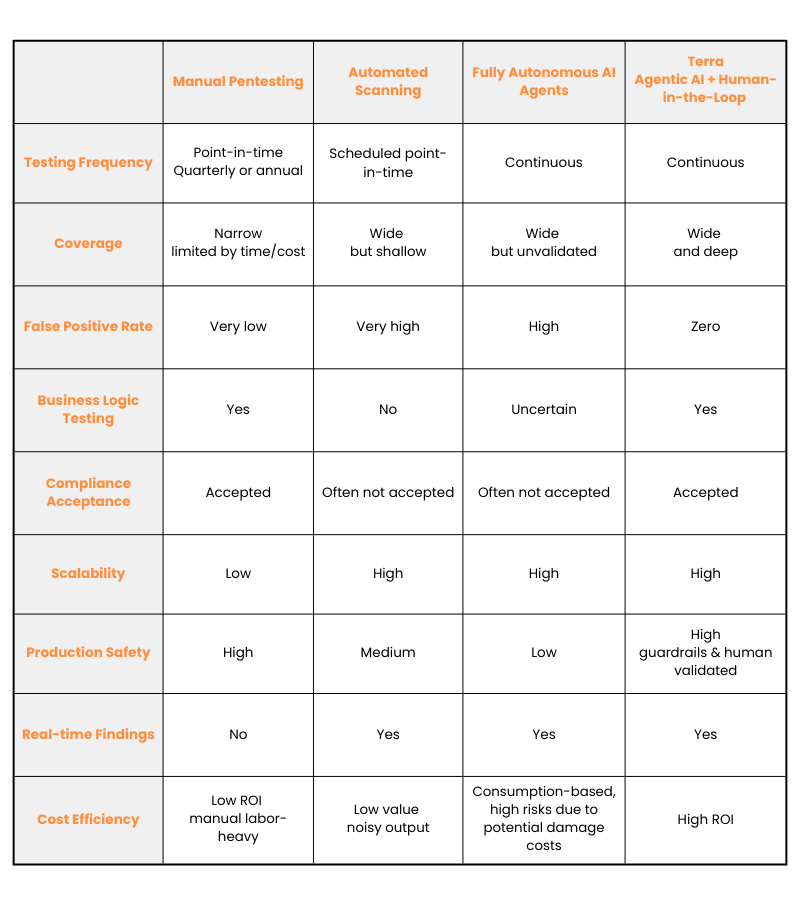

The security testing landscape offers three broad options, and each involves a different tradeoff between depth, scale, speed, and accuracy:

Traditional manual pentesting produces deep results but is slow, expensive, and point-in-time, meaning any vulnerability introduced between annual engagements goes undetected until the next cycle. Pure automation solves the frequency and scale problem but sacrifices accuracy and depth; automated scanners and pure agentic AI systems miss complex, multi-step attacks because they lack adversarial reasoning.

Terra's model is the only approach that achieves depth, accuracy, scale, and safety simultaneously because it uses each component (AI and human) for the tasks it is best suited to.

The Continuous Advantage of Human-in-the-Loop

Another dimension where the human-in-the-loop model proves its value is continuity. Traditional pentests are point-in-time events. A report is delivered, findings are triaged, some get remediated, and then the cycle resets three or six months later. But software doesn't stand still. Modern engineering teams ship code daily, sometimes hourly. Each deployment is a potential change to the attack surface.

Terra's platform runs continuously, triggered by changes across your web apps, APIs, cloud infrastructure, and network. When a new feature goes live, the AI agents immediately test it. When a configuration changes, the system re-evaluates exposure. This is what makes compliance sustainable rather than stressful. Rather than scrambling to prepare evidence for a SOC 2 or ISO audit, Terra clients maintain a continuously updated, audit-ready record of their security posture, validated by certified human experts.
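One way to picture change-triggered testing is to fingerprint each asset's deployed state and queue a retest only when the fingerprint moves. The sketch below is illustrative and assumes a simplified setup: `ChangeTrigger`, `fingerprint`, and the shape of the snapshot dictionaries are hypothetical, and a real platform would likely react to deployment or configuration events rather than compare snapshots.

```python
import hashlib
import json
from typing import Dict, List


def fingerprint(asset_state: dict) -> str:
    """Stable hash of an asset's state (key order does not matter)."""
    canonical = json.dumps(asset_state, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class ChangeTrigger:
    """Remembers the last fingerprint per asset and reports what changed."""

    def __init__(self) -> None:
        self._seen: Dict[str, str] = {}

    def assets_to_retest(self, snapshot: Dict[str, dict]) -> List[str]:
        changed = []
        for asset, state in snapshot.items():
            digest = fingerprint(state)
            # New assets and assets whose state changed both need testing.
            if self._seen.get(asset) != digest:
                changed.append(asset)
                self._seen[asset] = digest
        return sorted(changed)


trigger = ChangeTrigger()

# First snapshot: everything is new, so everything is queued.
first = trigger.assets_to_retest(
    {"api": {"version": "1.0"}, "web": {"version": "2.3"}}
)

# Identical snapshot: nothing changed, nothing is queued.
second = trigger.assets_to_retest(
    {"api": {"version": "1.0"}, "web": {"version": "2.3"}}
)

# A new API release ships: only that asset is queued.
third = trigger.assets_to_retest(
    {"api": {"version": "1.1"}, "web": {"version": "2.3"}}
)
```

Keying the retest on state changes rather than on a calendar is what separates this pattern from a point-in-time engagement: untouched assets are not retested, and changed ones are not left waiting for the next cycle.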

The Results Speak for Themselves

The proof of any security testing approach is not in its architecture. It's in its outcomes.

- A Fortune 100 Security Director reported that Terra doubled the web app attack surface tested without doubling costs or internal resources, while changing their testing model from “point in time” to “all the time”.

- Ben Hacmon, CISO at Perion Networks, noted that Terra delivered critical insights that other pentesters missed, made possible by the combination of AI depth and human business context.

- Yossi Yeshua, Riskified's CISO, highlighted how the combination of agentic AI and human oversight delivers the depth and scale a modern security organization needs while increasing accuracy and vulnerability validation.

These outcomes aren't coincidental. They are the direct result of a model where AI handles scale and speed, and humans bring judgment and creativity. Together, they produce something neither could achieve on its own.

The Bottom Line

The question for every security team isn't "should we use AI?" It's "how do we use AI, and who is accountable for what the AI does?" Fully autonomous agent platforms are redefining that question in ways that go beyond missed findings.

- They introduce operational risk, potential production damage, and accountability gaps that no algorithm can resolve on its own.

- Pure automation shifts accountability to a system that doesn't understand your business, your risk tolerance, or your compliance obligations.

- Purely manual testing can't keep pace with modern development velocity. With the stakes higher than ever, the need for a model where human judgment governs AI action has never been greater.

Terra's human-in-the-loop model answers that accountability question directly: a named, expert human tester is responsible for every intrusive action. The AI makes them faster and more thorough. The human makes the results trustworthy and safe.

If you're ready to move from a checkbox exercise to a security program that actually reduces risk, book a demo to see the Terra Platform in action.